On-Device Voice Assistant

A self-contained voice assistant that runs entirely on a Jetson Orin Nano. No cloud APIs, no internet required during conversation. Speech recognition, language model, text-to-speech, and voice activity detection all run on the integrated GPU.

This started as a question: can you run a useful conversational agent on an 8 GB edge device with under 4-second response latency, and can you make it actually pleasant to talk to? The answer turned out to be yes, with caveats. This page documents the system, shows some demo recordings, and explains the design choices that kept it usable.

The primary use cases right now are structured voice surveys (where the flow of questions is deterministic and the LLM only provides natural phrasing) and freeform conversation (open-ended chat with optional note-taking). The system supports additional flow types and is designed to be extensible.

Demo

Survey Mode

The assistant runs a structured intake survey: fixed questions, fixed order, bounded LLM follow-up probes. The LLM adds natural phrasing but never controls the flow, never invents facts, and never generates the survey questions themselves.

Basic intake survey (~5 min). The assistant asks about comfort level, goals, prior experience, privacy expectations, and usefulness.

Freeform Mode

Open-ended conversation. The assistant greets first, then listens. The user can ask questions, take notes ("remember that my appointment is Tuesday"), or just talk. Memory is ephemeral by default, wiped at the start of each session.

Freeform conversation with note-taking. Response latency is typically 2.5–3.5 seconds.

Hardware

| Component | What | Why |

|---|---|---|

| Compute | NVIDIA Jetson Orin Nano (8 GB) | CUDA GPU for parallel LLM + TTS + VAD inference |

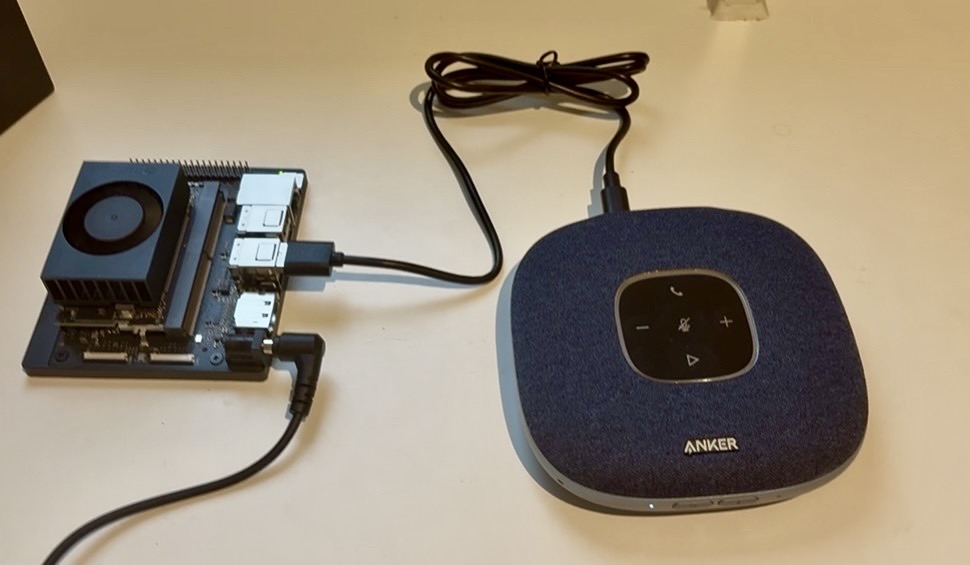

| Microphone & speaker | Anker PowerConf S3 | Hardware echo cancellation, full-duplex, USB |

| Storage | 128 GB microSD | Holds JetPack, models, runtime |

| Network | Any local network (Wi-Fi, USB-C Ethernet) | SSH access for control; not needed during conversation |

The full setup: Jetson Orin Nano, Anker PowerConf S3, power supply. No screen needed.

Architecture

The voice pipeline is a state machine: idle, listening, transcribing, thinking, speaking, and back to idle. Barge-in is supported: the microphone and VAD stay active during playback, so the user can interrupt at any time.

Audio pipeline. Each stage overlaps: TTS starts on the first LLM clause, not after the full reply.

| Stage | Engine | Runs on |

|---|---|---|

| Voice activity | Silero VAD (ONNX) | CUDA |

| Speech recognition | whisper-server (tiny.en, long-running) | CPU / CUDA |

| Language model | Ollama → qwen2.5:1.5b | CUDA, kept warm in VRAM |

| Text-to-speech | Kokoro v1.0 (ONNX, 24 kHz) | CUDA |

| Audio I/O | PortAudio / sounddevice | USB speakerphone |

Latency Budget

End-of-user-speech to first audio out, measured on warm qwen2.5:1.5b:

| Stage | Time |

|---|---|

| VAD silence detection | ~350 ms |

| Whisper ASR | ~0.8 s |

| LLM first chunk + Kokoro first clause | ~1.5–2.4 s |

| Speaker wake pad | ~0.3 s |

| Typical total | ~2.6–3.5 s |

Two choices keep this usable. First, the ASR server stays loaded in memory instead of cold-starting per utterance, which drops transcription from ~1.2 s to ~0.8 s. Second, TTS starts synthesizing the first clause (at the first comma) while the LLM is still generating the rest of the reply.

Structured Flows

The survey mode is built on deterministic YAML state machines. Each state

either speaks a fixed verbatim line or uses a

prompt_template to let the LLM phrase a bounded response.

Transitions are explicit. The LLM never decides what question comes next.

Basic intake survey flow. Solid boxes are fixed verbatim text; shaded boxes are bounded LLM probes.

A flow YAML file looks like this (shortened):

The key principle: fixed questions, bounded probes. The LLM adds warmth and follow-up phrasing. It does not control the order, invent facts, or decide when the survey ends. This is important for research contexts where you need reproducible instruments.

Other flows are straightforward to add. The system currently ships with a daily check-in, an evening reflection, a curiosity survey, and a vaccination consultation flow (following a CDC/WHO motivational interviewing framework). New flows are a YAML file and a one-line config change.

Control and Deployment

The system runs headless. No screen is needed on the Jetson. You SSH in from a laptop, set the boot flow, and start the supervisor. Logs stream to stdout and can be monitored in real time over SSH. A USB speakerphone play button can start and stop sessions without a terminal open.

Environment

Everything runs on the Jetson. The laptop is only used for setup, syncing code, and monitoring. No cloud service is involved during conversation.

Deployment environment. The laptop connects over Wi-Fi for setup; audio goes through USB.

Privacy posture: by default, all transcripts, semantic facts, and vector embeddings are wiped at the start of each session. Nothing accumulates on the device. This is configurable; persistent memory can be enabled for personal-assistant use cases.

Next Steps

The current system works, but there are several directions I want to explore:

If you are interested in using this for research or have ideas, get in touch.